Grammarly disables AI 'expert review' after backlash

JournalismPakistan.com | Published: 13 March 2026 | JP Global Monitoring


Grammarly has disabled its Expert Review feature after critics and some named experts accused the AI tool of attributing advice to individuals without their consent. Reporters also found instances of outdated descriptions and irrelevant source links.

SAN FRANCISCO — Grammarly has disabled a controversial artificial-intelligence feature that generated writing advice attributed to well-known authors, journalists, and academics, after the tool sparked criticism and a lawsuit over the alleged misuse of real people’s identities.

The feature, known as “Expert Review,” analyzed user text and produced editing suggestions framed as guidance from specific experts. However, many of the individuals whose names appeared in the tool said they had never consented to participate and were unaware their identities were being used to lend credibility to AI-generated feedback.

AI advice attributed to real experts sparks criticism

The feature was introduced in 2025 as part of Grammarly’s broader expansion into AI-driven productivity tools. According to the company, the system generated feedback “inspired by” the published work of subject-matter experts whose writings are widely available online.

But journalists, authors, and academics quickly objected after discovering that the platform associated their names with comments appearing in documents and editing interfaces. Critics said the presentation could mislead users into believing the advice had been personally provided or endorsed by the named experts.

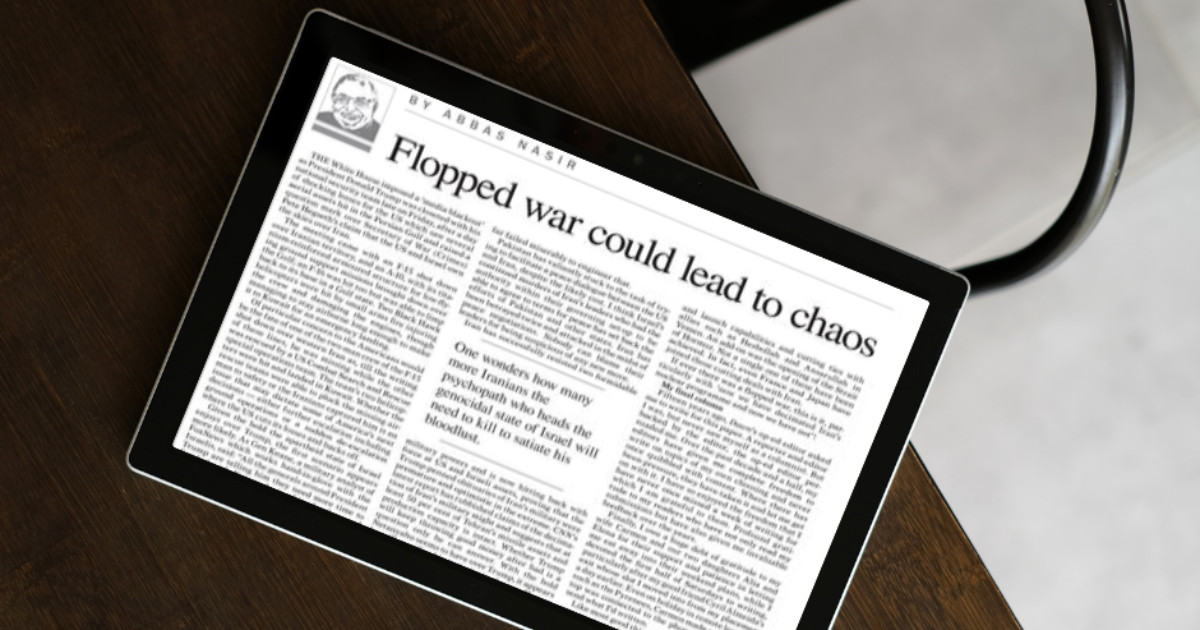

Investigations by technology reporters also found the tool sometimes attributed advice to people who had not authorized the use of their names or whose descriptions were outdated or inaccurate. In some cases, links provided as sources for the AI’s suggestions led to irrelevant or unreliable webpages.

Lawsuit filed over alleged identity misuse

The controversy escalated when investigative journalist Julia Angwin filed a proposed class action lawsuit in federal court in New York, alleging that Grammarly and its parent company, Superhuman, misappropriated the identities of journalists, authors, and editors.

The lawsuit argues that the platform used the names and reputations of real people to promote its AI product and generate profit, without their consent. Publicly available court filings say the case seeks damages and an injunction preventing further use of individuals’ identities in the system.

Following the backlash, Superhuman said it would disable the Expert Review feature while the company reassesses how expert perspectives should be incorporated into its AI writing tools.

The company apologizes and promises a redesign

In public statements, Superhuman acknowledged criticism from affected writers and said the company would redesign the feature to ensure experts have direct control over whether and how their work or identity is represented.

Company executives said the goal of the tool had been to help users access influential perspectives and scholarship relevant to their writing. However, they admitted that the implementation raised legitimate concerns about consent, attribution, and transparency.

The incident has become a prominent example of the growing legal and ethical debate surrounding generative AI systems that mimic human voices, writing styles, or identities based on publicly available material.

WHY THIS MATTERS: For Pakistani journalists and media professionals, the controversy highlights the emerging risks of AI systems replicating or attributing writing styles without permission. As AI tools increasingly enter newsrooms and editorial workflows, the episode underscores the need for stronger transparency rules, consent standards, and safeguards for journalists’ intellectual and professional identities.

ATTRIBUTION: Based on reporting by Wired (March 11, 2026) and The Verge (March 12, 2026).

PHOTO: AI-generated; for illustrative purposes only.

Key Points

- Grammarly disabled the Expert Review feature after public criticism and legal action.

- The tool generated editing suggestions framed as guidance from named authors, journalists, and academics.

- Many named individuals said they had not consented to the use of their identities.

- Reporters found instances of outdated or inaccurate attributions and irrelevant source links.

- The move follows broader scrutiny of AI tools that borrow from published online work.

Key Questions & Answers

What was the Expert Review feature?

It was an AI tool that analyzed user text and produced editing suggestions presented as guidance from specific named experts.

Why did Grammarly disable the feature?

The company disabled it after criticism and a lawsuit alleging the system used real people's names without consent; reporters also found attribution errors.

Did the named experts consent to being used?

Many of the individuals whose names appeared said they had not consented and were unaware their identities were being used.

Are there legal claims against Grammarly?

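Yes. The decision to disable the feature followed a proposed class action lawsuit, filed by investigative journalist Julia Angwin in federal court in New York, alleging misuse of real people's identities in the AI product.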
