Are newsrooms over-relying on AI? Risks deepen in 2026

JournalismPakistan.com | Published: 3 March 2026 | JP Special Report


Generative AI has shifted from experimentation to daily newsroom infrastructure, handling drafting, translation, transcription, and summaries. Editors now rely on automation for speed while wrestling with risks to editorial oversight and accuracy.

ISLAMABAD — Artificial intelligence has moved from newsroom experiment to daily infrastructure in less than three years. From automated earnings summaries and sports recaps to headline testing and social media copy, generative AI tools are now embedded in editorial workflows at major publishers and smaller digital outlets alike. What began as cautious trials in 2023 has, by 2026, evolved into routine reliance.

The shift is visible across global media. In early 2023, outlets such as The Associated Press and Reuters expanded automation for corporate earnings and data-driven stories, building on earlier structured-data systems. The launch of generative tools such as OpenAI's ChatGPT and competing large language models accelerated experimentation. By 2024 and 2025, publishers including Gannett and BuzzFeed publicly discussed using AI to assist with content production, personalization, and audience analytics. Industry surveys by organizations such as the Reuters Institute for the Study of Journalism have since documented growing newsroom adoption of generative AI for drafting, translation, and research support.

The question in 2026 is no longer whether AI belongs in the newsroom. The question is whether newsrooms are becoming too dependent on it.

Automation expands, editorial control tightens

AI systems now routinely assist with first drafts, background research, transcription, and translation. For multilingual markets, automated translation has reduced turnaround time for global stories. In breaking news, AI-generated summaries help editors quickly publish verified updates across digital platforms.

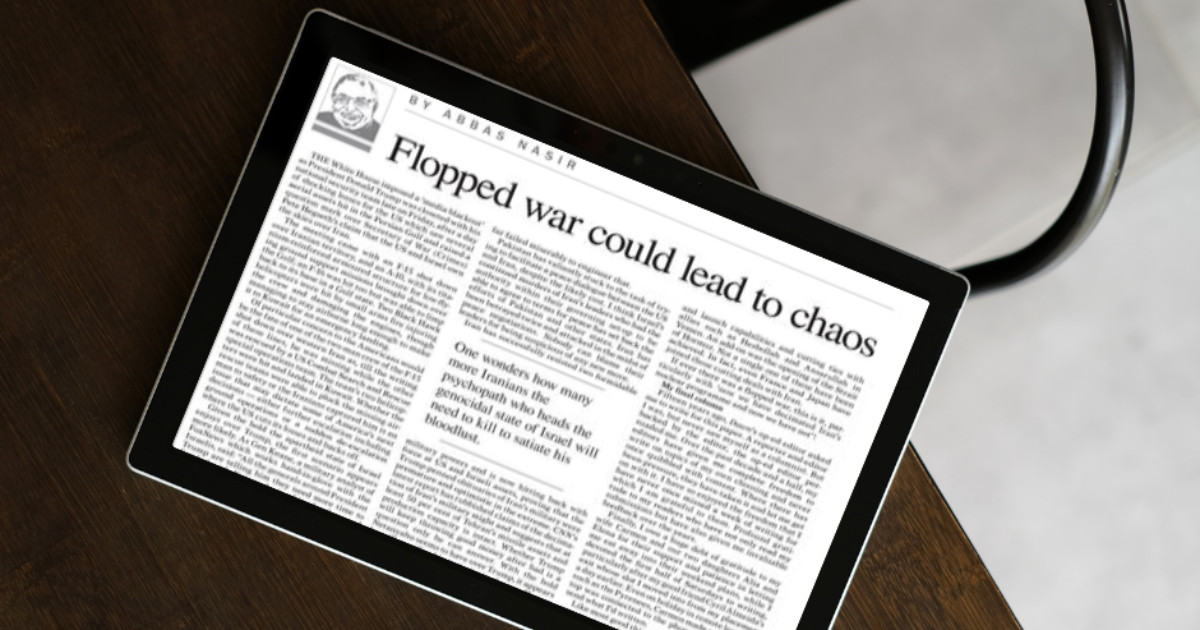

At the same time, high-profile corrections and retractions have underscored the risks of over-reliance. Several media organizations globally have faced scrutiny after AI-generated content included factual errors or misleading summaries. In response, publishers have issued clearer AI-use policies, emphasizing human oversight and mandatory verification before publication.

The Associated Press, for example, has publicly stated that automation is used primarily for structured data stories and that journalists remain responsible for oversight. Reuters has similarly emphasized that any AI-assisted content must meet its editorial standards. These guardrails suggest that responsible integration is possible. But they also highlight how easily speed can outpace scrutiny.

Accuracy under pressure

Generative AI systems are trained on vast datasets and can produce fluent, persuasive text. However, they are also prone to “hallucinations,” fabricating details or misrepresenting facts. In journalism, even minor inaccuracies can erode trust.

Trust is fragile. The Reuters Institute’s Digital News Report 2025 found that trust in news remains relatively low in many markets, including parts of Europe and the United States. In such an environment, visible AI errors can amplify skepticism about media credibility.

Moreover, AI-generated content can inadvertently reproduce biases embedded in training data. Without rigorous editorial review, automated summaries may frame stories in ways that distort nuance or omit critical context. For investigative reporting, where precision and legal risk are high, unverified AI output can pose reputational and legal dangers.

Cost pressures and newsroom economics

The economic case for AI is compelling. Many news organizations continue to face declining print revenues, volatile digital advertising markets, and subscription fatigue. Automation promises cost savings and efficiency gains, particularly for repetitive or data-heavy coverage.

Media layoffs have continued globally through 2024 and 2025, affecting both legacy publishers and digital startups. In this environment, AI can appear as a substitute for shrinking editorial teams. The risk is not simply technological error but structural change: fewer reporters, more automation, and thinner on-the-ground reporting.

Industry bodies such as the International Federation of Journalists have raised concerns about how automation may affect employment and working conditions. While AI can enhance productivity, replacing core reporting functions with automated systems may undermine the depth and diversity of journalism.

Audience transparency and disclosure

Another critical issue is disclosure. Surveys indicate that audiences want transparency when AI tools are used in content production. Some publishers have begun labeling AI-assisted articles or publishing newsroom guidelines outlining how generative tools are deployed.

Transparency can mitigate backlash. When readers understand that AI is used for drafting or data processing, with human editors retaining final responsibility, trust may be preserved. Conversely, undisclosed AI use can trigger reputational damage if errors emerge.

Regulatory scrutiny is also increasing. The European Union’s AI Act, approved in 2024, introduced risk-based obligations for certain AI applications. While journalism is not directly restricted, compliance obligations for AI developers and deployers are reshaping how technology companies and media organizations manage risk. In the United States and other jurisdictions, policymakers continue to debate AI governance, including copyright and accountability issues that affect publishers.

Balancing innovation with judgment

The core challenge is not whether to use AI, but how to integrate it without weakening editorial judgment. AI excels at pattern recognition, data summarization, and language generation. It does not independently verify sources, cultivate whistleblowers, or exercise ethical reasoning.

Newsrooms that treat AI as an assistant rather than an author appear better positioned. Structured use cases such as financial reports, sports statistics, and weather updates have long been automated with relatively low risk. Generative AI can extend these efficiencies, but high-stakes reporting demands caution.

Training is equally important. Journalists need to understand both the capabilities and limitations of AI tools. Without literacy in how models generate text and where they can fail, editorial oversight becomes superficial.

For smaller newsrooms, especially in emerging markets, the temptation to rely heavily on free or low-cost AI tools is significant. Yet these same organizations may lack legal resources or dedicated fact-checking teams to catch errors. The result can be uneven standards and reputational vulnerability.

WHY THIS MATTERS: For Pakistani newsrooms operating under financial constraints and intense political scrutiny, AI offers real efficiency gains. However, over-reliance without strict verification protocols could compound credibility challenges in an already polarized media environment. Clear AI-use policies, mandatory human oversight, and transparent disclosure can help Pakistani media organizations harness innovation without undermining trust. Investing in training journalists to critically evaluate AI outputs may prove as important as investing in the tools themselves.

ATTRIBUTION: This analysis draws on publicly available policies and statements from The Associated Press and Reuters, findings from the Reuters Institute Digital News Report 2025, and publicly documented developments related to the European Union AI Act and industry discussions by the International Federation of Journalists.

PHOTO: AI-generated; for illustrative purposes only.

Key Points

- Generative AI moved from trial to routine newsroom use between 2023 and 2026.

- Tools assist with first drafts, transcription, translation, headline testing, and social copy.

- Publishers report gains in speed and scale but face increased editorial and verification risks.

- Industry surveys document widespread adoption for drafting, research support, and analytics.

- Newsrooms are tightening editorial controls as automation expands, especially during breaking news.

Key Questions & Answers

Is AI now commonly used in newsrooms?

Yes. In 2026, many publishers integrate generative AI into daily workflows for drafting, translation, and audience analytics.

Are newsrooms over-relying on AI?

Concerns have risen that dependence on automation can weaken editorial oversight and verification, though practices vary by outlet.

What are the main risks associated with heavier AI use?

Key risks include reduced fact-checking, errors in automated content, bias in outputs, and challenges in attribution and accountability.

How can newsrooms mitigate these risks?

Measures include stronger editorial review, clear disclosure of AI use, rigorous fact-checking protocols, and ongoing staff training.
