AI deepfakes surge ahead of 2026 elections

JournalismPakistan.com | Published: 25 February 2026 | JP Special Report


AI deepfakes and synthetic political clips are increasing globally ahead of 2026 elections, prompting platform removals and warnings from election observers. Researchers and media groups say the surge strains verification systems and public trust.

ISLAMABAD— Artificial intelligence-generated deepfakes and synthetic news clips are escalating across multiple countries heading into key elections in 2026, prompting renewed warnings from election monitors, technology researchers, and media organizations about the impact on public trust and newsroom verification systems.

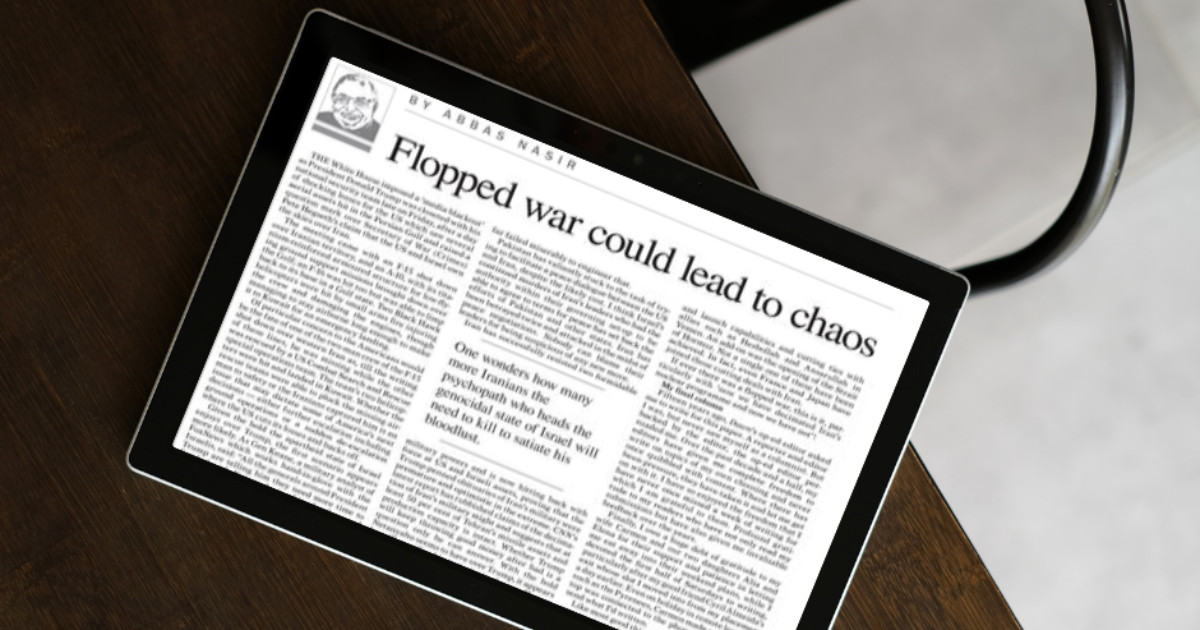

Research published over the past year by organizations such as the World Economic Forum has identified AI-driven misinformation as one of the most significant global risks in the short term, particularly during election cycles. Separately, technology firms such as Meta and Google have reported removing networks and accounts that distribute manipulated or AI-generated political content that violates platform policies, underscoring the scale of the challenge.

Rising use of synthetic political content

In the United States, which will hold midterm elections in November 2026, academic institutions and digital forensics groups have documented an increase in AI-generated audio clips and manipulated videos falsely depicting candidates making inflammatory statements. Similar concerns have been raised in India and parts of Europe, where national or regional votes are scheduled for 2026.

The nonprofit organization Freedom House warned in its most recent Freedom on the Net report that AI tools are making it cheaper and faster to generate deceptive political content at scale. While the report does not focus exclusively on 2026, it notes a clear upward trend in the use of generative AI in political manipulation campaigns globally.

Newsrooms overhaul verification workflows

In response, major international media organizations, including The New York Times and the BBC, have expanded internal protocols for verifying user-generated content. These measures include mandatory reverse image searches, metadata analysis, forensic audio checks, and the use of specialized AI-detection tools before publishing politically sensitive material sourced from social platforms.

The BBC has publicly detailed its investment in disinformation and social media investigation teams to handle manipulated content during election cycles. Similarly, Reuters has enhanced its fact-checking operations through Reuters Fact Check, focusing on viral election-related claims and synthetic media.

Technology companies have also introduced labeling policies for AI-generated political ads. Google requires disclosure when political advertisements contain digitally altered or synthetic content, while Meta requires advertisers to disclose the use of AI-generated imagery or audio in political messaging.

Erosion of public trust and regulatory response

Public trust implications remain central to the debate. Surveys conducted by the Reuters Institute for the Study of Journalism at the University of Oxford show that trust in news has declined in several markets over recent years, even before the widespread use of generative AI tools. Experts warn that the normalization of deepfakes could deepen skepticism, as audiences may begin doubting authentic reporting alongside fabricated content.

Regulators are also responding. The European Union’s Digital Services Act, which imposes due diligence obligations on large online platforms to mitigate systemic risks, including disinformation, is being closely watched as elections approach in several EU member states in 2026. Enforcement actions under the Act are ongoing, with the European Commission investigating compliance by major platforms.

Media analysts caution that while generative AI itself is not new to election cycles, the accessibility of consumer-grade tools has lowered the barrier to entry. Tools capable of producing realistic voice clones or video avatars are now widely available, increasing the likelihood of localized and short-lived disinformation bursts that can spread before being debunked.

WHY THIS MATTERS: Pakistani newsrooms have already faced coordinated disinformation campaigns during election periods. As generative AI tools become more accessible globally, Pakistani journalists and editors may need to formalize AI-detection protocols, invest in verification training, and develop public-facing transparency strategies to maintain credibility during future electoral cycles.

ATTRIBUTION: This report is based on publicly available research and reports from the World Economic Forum, Freedom House, the Reuters Institute for the Study of Journalism at the University of Oxford, the European Commission, and policy disclosures by Meta, Google, the BBC, Reuters, and The New York Times.

PHOTO: AI-generated; for illustrative purposes only.

Key Points

- AI deepfakes and synthetic clips are rising globally ahead of the 2026 election cycle.

- Platforms such as Meta and Google report removing networks that distribute manipulated political content.

- Observers and researchers warn the trend threatens public trust and election integrity.

- Newsrooms and digital forensics teams are updating verification workflows to detect synthetic media.

- Organizations including Freedom House and the World Economic Forum flag generative AI as a growing information risk.

Key Questions & Answers

What are AI deepfakes?

Deepfakes are synthetic audio or video created using AI to realistically impersonate people; they can fabricate statements or events for deceptive purposes.

What actions are platforms taking?

Companies such as Meta and Google report removing networks and accounts that distribute manipulated political content and enforcing their policies against violations.

How might deepfakes affect the 2026 elections?

They can erode public trust and complicate voter information environments, increasing pressure on verification systems and election monitors.

How are newsrooms responding?

Newsrooms and digital forensics groups are overhauling verification workflows, adopting new tools and cross-checking practices to detect synthetic media.
