India tightens tech platform rules with 3‑hour content takedown

JournalismPakistan.com | Published: 18 February 2026 | JP Asia Desk

India has tightened rules for tech platforms, mandating the removal of unlawful content within three hours under amended intermediary guidelines; officials warned of tougher measures to curb AI deepfakes and said platforms must respect Indian laws and norms.

NEW DELHI — India’s Information Technology Minister Ashwini Vaishnaw said on February 17 that global technology platforms, including YouTube, Meta, X, and Netflix, must operate strictly within India’s constitutional and legal framework. The statement came shortly after New Delhi enacted tougher rules requiring much faster removal of unlawful content. He made the remarks on the sidelines of the India AI Impact Summit 2026, where he also stressed the growing need for stronger regulation of AI‑generated deepfakes and harmful content.

India’s tightened content moderation rules, notified last week under the amended Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, mandate that social media companies comply with takedown orders for unlawful posts within three hours of notification, a sharp reduction from the previous 36‑hour window. Vaishnaw brushed aside industry executives' concerns about the operational feasibility of such compressed timelines, saying that major tech companies have the technical capacity to act even faster.

Moderation pressures grow as deepfakes spread

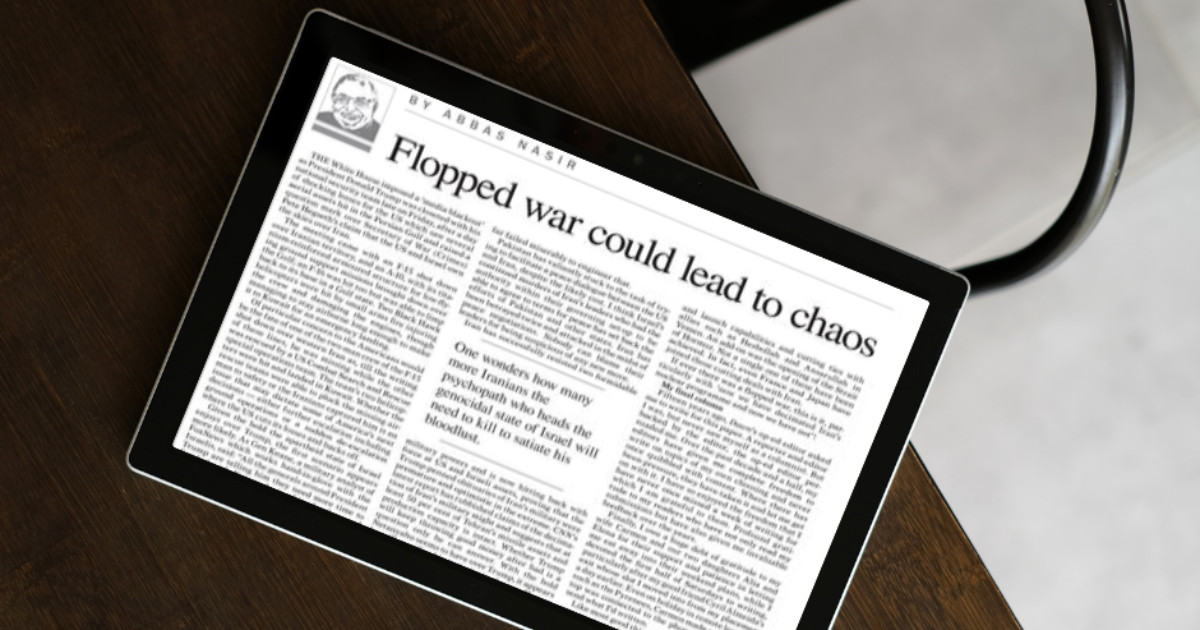

In his comments, the minister underscored that platforms must respect not only Indian law but also the country’s cultural context, noting that failure to do so undermines public trust in digital spaces. He highlighted the accelerating problem of deepfakes and synthetic media, saying India is exploring much stronger regulation and holding ongoing dialogues with technology companies and other nations to tackle the issue.

Critics of the new regime argue that accurately determining what constitutes unlawful content within such brief windows is logistically challenging and could lead to over‑reliance on automated systems. Platforms, including Meta, have publicly flagged operational concerns with the three‑hour mandate, saying it strains their moderation processes.

Strengthening child safety and AI content accountability

India is also considering age‑based restrictions and enhanced safeguards for children’s online safety, and has pushed for clearer labelling and provenance requirements for AI‑generated and manipulated content, a move aligned with global efforts to curb harmful deepfakes and safeguard digital information integrity.

Analysts say India’s regulatory shift puts it among a growing number of countries demanding greater accountability from global tech firms on content moderation and AI governance, amid rising concerns about misinformation, digital harms, and the erosion of democratic norms by synthetic media.

WHY THIS MATTERS: Pakistan’s media sector faces similar pressures around digital misinformation and platform governance. Journalists and news organizations should track how accelerated takedown requirements and deepfake safeguards might reshape content distribution, moderation practices, and legal compliance for global platforms operating in South Asia’s diverse regulatory environments.

ATTRIBUTION: Reporting based on verified coverage from Reuters, Moneycontrol, The Tribune, and other credible sources.

PHOTO: AI‑generated; for illustrative purposes only.

Key Points

- New rules require social media companies to remove unlawful content within three hours of notification, down from 36 hours.

- Rules apply to major global platforms such as YouTube, Meta, X and Netflix.

- Information Technology Minister Ashwini Vaishnaw said platforms must follow Indian law and respect the country's cultural context.

- Government signalled tougher regulation to address AI-generated deepfakes and synthetic media.

- Industry concerns about operational feasibility were dismissed; the minister said major firms have the technical capacity to act even faster.

Key Questions & Answers

What is the new takedown timeline?

Platforms must comply with takedown orders for unlawful posts within three hours of notification, reduced from 36 hours.

Which companies does the rule affect?

The rules apply to major social media and streaming platforms operating in India, including global tech firms such as YouTube, Meta, X and Netflix.

Who announced the changes?

Information Technology Minister Ashwini Vaishnaw spoke about the rules and related measures on the sidelines of the India AI Impact Summit 2026.

How is India responding to deepfakes?

Officials said India is exploring stronger regulation of AI-generated deepfakes and is engaging with companies and other countries to address the issue.
